I like to say that “you can’t ignore the physics any more” to point out that we have to worry about lots of physical effects that we never needed to consider. But “you can’t ignore the statistics any more” would be another good rallying cry.

In the design world we like to pretend that everything is pass/fail. If you don’t break the design rules, your chip will yield. If your chip timing works at the worst-case corner, then your chip will yield (yes, you need to look at other corners too).

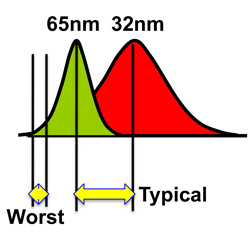

But manufacturing is actually a statistical process and isn’t pass/fail at all. One area that is getting worse with each process generation is process variability, especially in power and timing. If we look at a particular number, such as the delay through a NAND gate, then the difference between worst-case and typical is getting larger: the standard deviation about the mean is increasing. This means that when we move from one process node to the next, the typical time improves by a certain amount but the worst-case time improves by much less. If we design to worst-case timing, we don’t see much of the payback from the investment in the new process.
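To put rough numbers on that, here is a minimal sketch. Everything in it is an assumption for illustration: the delays and standard deviations are invented rather than taken from any real process, and "worst case" is taken to mean the mean plus three standard deviations.

```python
# Hypothetical numbers only: mean and sigma of a NAND-gate delay, in picoseconds.
K_SIGMA = 3.0  # assumption: worst case is modeled as mean + 3 sigma


def worst_case_delay(mean_ps, sigma_ps, k=K_SIGMA):
    """Worst-case delay under the mean + k*sigma assumption."""
    return mean_ps + k * sigma_ps


def fractional_gain(before, after):
    """Fraction by which a delay shrank going from `before` to `after`."""
    return (before - after) / before


old_node = {"mean_ps": 100.0, "sigma_ps": 5.0}
new_node = {"mean_ps": 70.0, "sigma_ps": 10.0}  # faster on average, but wider spread

typical_gain = fractional_gain(old_node["mean_ps"], new_node["mean_ps"])
worst_gain = fractional_gain(
    worst_case_delay(**old_node), worst_case_delay(**new_node)
)

print(f"typical delay improves by {typical_gain:.0%}")
print(f"worst-case delay improves by {worst_gain:.0%}")
```

With these made-up figures the typical delay improves by 30%, but the worst-case delay only goes from 115 ps to 100 ps, about 13%.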

An additional problem is that we have to worry about variation across the die in a way we could get away with ignoring before. In the days before optical proximity correction (OPC), the variation on a die was pretty much all due to things that affected the whole die: the oxide was slightly too thick, the reticle was slightly out of focus, the metal was slightly over-etched. But with OPC, identical transistors may get patterned differently on the reticle, depending on what else is in the neighborhood. This means that when the stepper is slightly out of focus, it will affect identical transistors (from the designer’s point of view) differently.

Treating worst-case timing as an absolutely solid and accurate barrier was always a bit weird. I used to share an office with a guy called Steve Bush who had a memorable image of this. He said that treating worst-case timing as accurate to fractions of a picosecond is similar to the way the NFL treats first down. There is a huge pile of players. Somewhere in there is the ball. Eventually people get up and the referee places the ball somewhere roughly reasonable. And then they get out chains and see to fractions of an inch whether it has advanced ten yards or not.

Statistical static timing analysis (SSTA) allows some of this variability to be examined. There is a problem in static timing of handling reconvergent paths well, so that you don’t simultaneously assume that the same gate is both fast and slow. It has to be one or the other, even though you need to worry about both cases.
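The reconvergence problem can be illustrated with a toy Monte Carlo experiment. This is only a sketch under stated assumptions, not how any particular SSTA tool works: the three gates, their delays, and the Gaussian variation model are all hypothetical. Two paths share one gate and then reconverge, and we look at the skew between them. A naive corner analysis makes the shared gate slow on one path and fast on the other; sampling the shared gate once per trial keeps it consistent.

```python
import random

# Hypothetical (mean, sigma) delays in picoseconds for three gates.
SHARED = (50.0, 5.0)    # gate common to both paths before they split
BRANCH_A = (30.0, 2.0)  # gate only on path A
BRANCH_B = (30.0, 2.0)  # gate only on path B
K = 3.0                 # assumption: corners sit at mean +/- 3 sigma


def slow(gate):
    mean, sigma = gate
    return mean + K * sigma


def fast(gate):
    mean, sigma = gate
    return mean - K * sigma


def corner_skew_bound():
    """Naive bound: path A all slow, path B all fast.

    The shared gate is assumed slow on A and fast on B at the same time,
    which cannot happen on a real die.
    """
    return (slow(SHARED) + slow(BRANCH_A)) - (fast(SHARED) + fast(BRANCH_B))


def sampled_skews(trials=10_000, seed=0):
    """Monte Carlo skews where the shared gate has ONE delay per trial."""
    rng = random.Random(seed)
    skews = []
    for _ in range(trials):
        shared = rng.gauss(*SHARED)  # same value feeds both paths
        a = rng.gauss(*BRANCH_A)
        b = rng.gauss(*BRANCH_B)
        skews.append((shared + a) - (shared + b))
    return skews


skews = sampled_skews()
print(f"naive corner bound on skew: {corner_skew_bound():.1f} ps")
print(f"largest sampled skew:       {max(skews):.1f} ps")
```

Most of the naive bound comes from the shared gate, whose contribution cancels entirely once it is forced to take a single value per die.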

But there is a more basic issue. The typical die is going to be at a typical process corner. If we design everything to worst case, then most of our chips are going to have much higher performance than necessary. Now that we care a lot about power, this is a big problem: they consume more power than necessary, giving us all that performance we cannot use. There has always been an issue that the typical chip has performance higher than we guarantee, and when it is important we bin the chips for performance during manufacturing test. But with increased variability the range is getting wider, and when power rather than timing is important, too fast is a big problem.

One way to address this is to tweak the power supply voltage to slow down the performance to just what is required, along with a commensurate reduction in power. This is called adaptive voltage scaling (AVS). Usually the voltage is adjusted to take into account the actual process corner, and perhaps even the operating temperature as it changes. Once this is done then it is possible to bin for power as well as performance. Counterintuitively, the chips at the fastest process corner may well be the most power thrifty since we can reduce the supply voltage the most.
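Here is a minimal sketch of why the fast chips can come out ahead. It rests on several assumptions that are mine, not anything measured: gate delay follows the alpha-power law, delay ∝ V / (V − Vt)^α; dynamic power at a fixed clock scales as V²; leakage is ignored entirely (and real fast-corner silicon tends to leak more); and the threshold voltage, exponent, and per-corner speed factors are invented.

```python
V_NOM = 1.0   # nominal supply voltage (hypothetical)
VT = 0.3      # threshold voltage (hypothetical)
ALPHA = 1.3   # alpha-power-law exponent (hypothetical)

# Hypothetical speed factors: smaller means a faster process corner.
CORNERS = {"slow": 1.00, "typical": 0.85, "fast": 0.70}


def delay(v, k):
    """Relative critical-path delay at supply v for a chip with speed factor k."""
    return k * v / (v - VT) ** ALPHA


def min_voltage(k, target, lo=VT + 0.05, hi=V_NOM, iters=60):
    """Lowest supply in [lo, hi] that still meets `target` delay (bisection).

    Works because delay() falls monotonically as v rises for ALPHA >= 1.
    """
    if delay(hi, k) > target:
        raise ValueError("chip cannot meet timing even at nominal voltage")
    if delay(lo, k) <= target:
        return lo
    for _ in range(iters):
        mid = (lo + hi) / 2
        if delay(mid, k) <= target:
            hi = mid  # mid is fast enough, try lower
        else:
            lo = mid  # mid is too slow, need more voltage
    return hi


# The timing target is whatever the slow corner achieves at nominal voltage.
target = delay(V_NOM, CORNERS["slow"])

voltage = {name: min_voltage(k, target) for name, k in CORNERS.items()}
# Dynamic power relative to running at V_NOM, same clock, same capacitance.
rel_power = {name: (v / V_NOM) ** 2 for name, v in voltage.items()}

for name in CORNERS:
    print(f"{name:8s} V = {voltage[name]:.3f}  relative dynamic power = {rel_power[name]:.2f}")
```

In this model the slow chip has to stay at nominal voltage, while the fast chip can drop its supply the furthest and so burns the least dynamic power for the same guaranteed performance.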