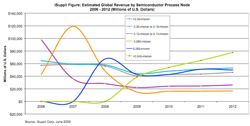

The recent iSuppli report has been getting a lot of coverage (EDN, Wall Street Journal if you have a subscription). It more or less predicts the end of Moore’s law. If you look at the graph you can see that no process is ever predicted to make as much money at its peak as 90nm did, but that all the subsequent process generations live on for a long time in a many-horse race.

I’ve often said that Moore’s law is an economic law as much as a technical one. Semiconductor manufacturing is a mass production technology, and the mass (volume) required to justify it is increasing all the time because the cost of the fabs is going up all the time. This is Moore’s second law: the cost of the fab is also increasing exponentially over time.

So the cost of fabs is increasing exponentially over time and the number of transistors on a chip is increasing exponentially over time. In the past, say the 0.5um to 0.25um transition, the economics were such that the cost per transistor dropped, in this case by about 50%. This meant that even if you didn’t need to do a 0.25um chip, if you were quite happy with 0.5um for area, performance and power, then you still needed to move to 0.25um as fast as possible or else your competitors would have an enormous cost advantage over you.

We are at a different point on those curves now. Consider moving a design from 65nm to 32nm. The performance is better, but not by as much as it used to be when moving from one process node to the next. The power is a bit better, but we can’t reduce the supply voltage enough, so the saving is not as big as it used to be, and the leakage is probably worse. The cost per chip is lower, but only at volumes high enough to amortize the huge engineering cost, so that saving is smaller than it used to be too. This means that the pressure to move to the next process generation is much less than it used to be, and this is showing up in the iSuppli graph as those flattening lines.
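To make the amortization argument concrete, here is a minimal sketch of the break-even volume between two nodes. It assumes a very simple model (a one-time engineering cost spread over the volume, plus a fixed manufacturing cost per chip), and every dollar figure is a made-up illustration, not data from the iSuppli report.

```python
def cost_per_chip(nre, unit_cost, volume):
    """One-time engineering cost (NRE) spread over the volume, plus unit cost."""
    return nre / volume + unit_cost


def break_even_volume(nre_old, unit_old, nre_new, unit_new):
    """Volume above which the new node is cheaper per chip.

    Returns None if the new node never wins (its unit cost is not lower).
    """
    if unit_new >= unit_old:
        return None
    # Solve nre_old / v + unit_old == nre_new / v + unit_new for v.
    return max(0.0, (nre_new - nre_old) / (unit_old - unit_new))


# Hypothetical numbers, chosen only to illustrate the shape of the problem:
# 65nm: $10M engineering cost, $5 per chip; 32nm: $40M engineering cost, $3 per chip.
volume = break_even_volume(10e6, 5.0, 40e6, 3.0)
print(f"32nm only pays off above {volume:,.0f} units")
```

With these assumed numbers a design needs to ship many millions of units before the newer node is cheaper, which is the reduced pressure to migrate described above.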

Some designs will move to the most advanced process since they have high enough margins, need every bit of performance and every bit of power saving, and are manufactured in high enough volume to make the new process cheaper. Microprocessors and graphics chips are obvious candidates.

FPGAs are the ultimate way to aggregate designs that don’t have enough volume to get the advantages of new process nodes. But there is a “valley of death”, where there is no good technology, and it is widening. The valley of death is where volume is too high for an FPGA price to be low enough (say, for some consumer products) but the volume isn’t high enough to justify designing a special chip. Various technologies have tried to step into the valley of death: quick turnaround ASIC like LSI’s RapidChip, FPGAs that can be mass produced with metal mask programming, laser programming, e-beam direct-write. But they all have died in the valley of death too. Canon (steppers) to the left of them, Canon to the right of them, into the valley of death rode the six hundred.

Talking of “The charge of the light brigade,” light and charge are the heart of the problem. Moore’s law involves many technologies, but at the heart of them all is lithography and the wavelength of light used. With lithography we are running into real physical limitations writing 22nm features with 193nm light, with no good way to build lenses for shorter wavelengths. And on the charge side, there is no good way to speed up e-beam writing enough.

So today, the most successful way to live in the valley of death is to use an old process. Design costs are low, mask costs are low, and the fab is depreciated. You get a much better price per chip than an FPGA, better power than an FPGA, and nowhere near the cost of designing in a state-of-the-art process. For really low volumes you can never beat an FPGA, and for really high volumes you won’t beat the most advanced process, but in the valley of death different processes have their advantages and disadvantages.
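The three regimes can be sketched with the same kind of back-of-the-envelope model: an up-front engineering cost plus a cost per chip for each option. The option names follow the text, but the numbers in `OPTIONS` are purely hypothetical and only chosen so that each option wins somewhere.

```python
# (up-front engineering cost in $, manufacturing cost per chip in $)
# All figures are illustrative assumptions, not real pricing.
OPTIONS = {
    "FPGA": (0.0, 50.0),               # no mask set, expensive per unit
    "old process": (2e6, 8.0),         # cheap masks, depreciated fab
    "advanced process": (40e6, 3.0),   # huge design cost, cheapest silicon
}


def cheapest_option(volume, options=OPTIONS):
    """Return the name of the option with the lowest total cost per chip."""
    def per_chip(name):
        nre, unit_cost = options[name]
        return nre / volume + unit_cost
    return min(options, key=per_chip)


for volume in (1_000, 1_000_000, 100_000_000):
    print(f"{volume:>11,} units: {cheapest_option(volume)}")
```

Under these assumptions the FPGA wins at a thousand units, the advanced process wins at a hundred million, and the old process covers the wide stretch in between.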

However, if we step back a bit and look at “Moore’s law” over an even larger period, we can look at Ray Kurzweil’s graph of computing power growth over time. This is pretty much continuous exponential growth (a straight line on a logarithmic scale) for over a century through five different technologies (electromechanical, relay, tube/valve, transistor, integrated circuit). If this exponential growth continues then it might turn out to be bad news for semiconductor, just as it was bad news for vacuum tube manufacturers by the 1970s. Something new will come along. Alternatively, it might be something that is new only in the way that integrated circuits were: they still contain transistors, but are manufactured in a way that is orders of magnitude more effective.

We don’t need silicon. We need the capabilities that silicon, as the most recent substrate of choice, has delivered. On a historical basis, I wouldn’t bet against human ingenuity in this area. Software performance will increase somehow.