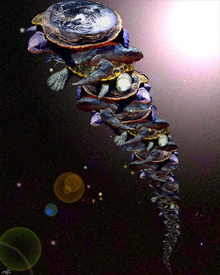

According to Stephen Hawking, Bertrand Russell once gave a public lecture on astronomy. He described how the earth orbits around the sun and how the sun, in turn, orbits around the center of a vast collection of stars called our galaxy. At the end of the lecture, a little old lady at the back of the room got up and said: "What you have told us is rubbish. The world is really a flat plate supported on the back of a giant turtle." The scientist gave a superior smile before replying, "What is the turtle standing on?" "You’re very clever, young man," said the old lady. "But it’s turtles all the way down!"

Electronic systems are a bit like that. What is a system depends on who you talk to, and a system to one person is built out of components that are themselves systems to someone else.

In the EDA and semiconductor world we are used to talking about systems-on-chip, or SoCs. But the reality is that almost no consumer product consists only of a chip. The closest are probably those remote sensing transport fare-cards like Translink now creeping around the Bay Area (finally, well over 10 years after Hong Kong’s Octopus card, which was probably the first). They are self-contained and don’t even need a battery (they are powered by induction). Even a musical birthday card requires a battery and a speaker along with the chip to make a complete system.

Most SoCs require power supplies, antennas and a circuit board of some sort, plus a human interface (screen, buttons, microphones, speakers, USB…) to make an end-user product. Nonetheless, a large part of the intelligence and complexity of a consumer product is distilled into the primary SoC inside, so it is not a misnomer to call them systems.

However, when we talk about ESL (electronic system level) in the context of chip design, we need to be humble and realize that the chip goes into something larger that some other person considers to be the system. Importantly, from a business perspective, the people at the higher level have very little interest in how the lower-level components are designed, and it is technically hard to take advantage of that knowledge in any case. The RTL designer doesn’t care much about how the library was characterized; the software engineer doesn’t care much about which language was used for the RTL; and so on.

At each level some model of the system is required. It seems to be a rule of modeling that it is very difficult to improve (automatically) the performance of a model by much more than a factor of 10 or 20 by throwing out detail. Obviously, you can’t do software development on an RTL model of the microprocessor; too slow by far. Less obviously, you can’t create a model on which you can develop software simply by taking the RTL model, reducing its detail and speeding it up. At the next level down, the RTL model itself is not something that can be created simply by crunching the gate-level netlist, which in turn is very different from the circuit simulation model. The process development people model implants and impurities in semiconductors, but those models are not much use for analog designers; they contain too much of the wrong type of detail, making them too slow.

When I was at Virtutech, Ericsson was a customer and they used (and still do, as far as I know) Virtutech’s products to model 3G base stations, which is what the engineers we interfaced with considered a system. A 3G base station is a cabinet-sized box that can contain anything from a dozen up to 60 or so large circuit boards, in total perhaps 800 processors all running their own code. Each base station is actually a unique configuration of boards, so each one had to be modeled to make sure that its particular collection of boards operated correctly, which was easiest to do with simulation. The alternative, finding all the right boards and cables to assemble the physical configuration, would take at least a couple of weeks.

I was at a cell-phone conference in the mid-1990s where I talked to a person in a different part of Ericsson. They had a huge business building cell-phone networks all over the world. He did system modeling of some sort to make sure that the correct capacity was in place. To him a system wasn’t a chip, wasn’t even a base-station. It was the complete network of base-stations along with the millions of cell-phones that would be in communication with them. He thought on a completely different scale to most of us.

His major issues were all at the basic flow levels. The type of modeling he did was more like fluid dynamics than anything electronic. The next level down, at the base-station, the biggest problem was getting the software correctly configured for what is, in effect, a hugely complex multi-processor mainframe with a lot of radios attached. Even on an SoC today, more manpower goes into the software than into designing the chip itself.

And most chips are built using an IP-based methodology, some of which is complex enough to call a system in its own right. So it’s pretty much “turtles all the way down”.